Key takeaways

- AI-generated content is what an AI tool produces from a prompt: text, scenarios, question banks, drafts. AI-assisted instructional design is what a designer does with that content to turn it into training that teaches.

- What AI does well is generation. What it doesn't do is exercise judgment about whether content should exist, what shape it should take, who needs it, and whether it actually teaches what it's supposed to.

- A useful AI workflow has two halves. The prompt half front-loads inputs, task, structure, constraints, tone, and objective. The review half checks output for objective alignment, SME (factual) risk, tone match, feasibility, and accessibility.

- Most failed AI training projects don't fail because the AI was bad. They fail because no one did the instructional design work. Generation got delivered as if it were a course.

- The expertise that hasn't gone extinct is design judgment. AI changes what an instructional designer does day to day, but the discipline matters more, not less, when content generation is cheap.

AI can generate training content. Most L&D leaders have seen the demos by now, and many have run a few prompts themselves. The output is impressive: a draft scenario, a question bank, a job aid that would have taken hours appears in seconds.

The harder question is what to do with it.

That’s where the difference between AI-generated content and AI-assisted instructional design lives. The terms sound similar. They describe different work. The first is what an AI tool produces from a prompt. The second is what an instructional designer (with or without AI in the workflow) does to turn that output into training that actually teaches.

This guide walks through what each one is, how they combine in a working AI-assisted workflow, and why the distinction matters more than the words make it sound, both for L&D buyers and for the people whose careers are built around the discipline.

The short answer

AI-generated content is the output of a tool. Instructional design is a discipline. They produce different things at different scales, and conflating them is how AI training projects fail.

AI-generated content is text, scenarios, question banks, summaries, or draft media that come out of an AI tool in response to a prompt. The work happens in seconds. The output looks polished. It often reads well.

AI-assisted instructional design is the much larger job around that output: deciding what should be taught and to whom, breaking the topic into objectives, choosing a structure, designing practice and assessment, sequencing the content, reviewing the AI output against the objectives, and shipping something a learner can actually use to do their job differently. AI sits inside that workflow. It doesn’t replace it.

What AI-generated content actually is

AI-generated content is what comes out of a tool when you give it a prompt. The category includes draft text, scenario descriptions, role-play scripts, question banks, summaries of source material, rewritten policy in plainer language, mock dialogue, image alternatives, and the kind of scaffolding documents (job aids, checklists, quick-reference guides) that L&D teams used to spend whole afternoons drafting from scratch.

The capability is real. The output is often good enough to use as a starting point. Occasionally it’s good enough to use with minor edits.

A few things AI generation does well that are worth naming:

- Speed. Five minutes of prompt-and-revise produces drafts that used to take an afternoon. The math on that is significant.

- Volume. Generating ten variants of the same scenario, or a hundred quiz questions for a topic, becomes practical when it wasn’t before.

- Range. The tools have been trained on enormous amounts of material. They can produce plausible drafts across more subjects than any single SME could cover.

There are also limits, and the limits are categorical. They don’t get fixed by better prompting:

- It doesn’t decide whether something should be taught. It produces what you ask for. If what you ask for shouldn’t exist as training, it produces it anyway.

- It doesn’t know your audience. It can adopt a tone, but it can’t tell you whether your audience needs a five-minute job aid or a twenty-minute branched scenario.

- It doesn’t verify accuracy against your operating reality. The output might be plausibly correct in general but wrong about your equipment, your policy, your jurisdiction.

These aren’t bugs. They’re properties of generation as a task. The work that decides whether content should exist, who needs it, and whether the output is right for them: that’s instructional design.

What instructional design actually does

Instructional design decides what should be taught, in what form, to whom, with what practice, against what objective. The discipline is wider than course design. It runs from the question of whether training is even the right intervention through to whether the training that shipped actually changed behavior.

The work happens in steps that are mostly invisible from outside the function. A good instructional designer starts before any content exists. They talk to stakeholders and subject matter experts (SMEs) about what people actually need to do differently after the training. They identify the gap between current performance and target performance. They decide whether training is even the right intervention, or whether the problem is process, tooling, or accountability and training will produce nothing useful no matter how well it’s built.

If training is the answer, the next decisions are still upstream of any content:

- What does competence look like for this audience?

- What’s the smallest, sharpest version of the training that gets there?

- What modality fits the constraint (eLearning, job aid, scenario practice, instructor-led, mixed)?

- Where does practice need to live so behavior actually changes?

- What does assessment measure: completion or capability?

Once those decisions are made, content production starts. This is where AI generation lives in the workflow. The content might be drafted by an AI tool, by a human writer, by an SME, or by a combination. What matters is that the production is built to fit the design decisions that were already made.

After production comes review, integration, and iteration. Does the content meet the objectives? Does the practice produce the behavior change the design promised? Does the assessment measure something real, or just seat time?

The design discipline runs through the whole sequence. AI generation lives inside one part of it.

The AI-assisted workflow: how the two combine

AI assists instructional design when the design work is done first and the AI gets used to produce the content the design calls for. The order matters. Generate first and design later, and you end up shaping the design around whatever the AI happened to produce, which is exactly the inversion that produces unusable training.

A useful AI workflow has two halves. The first half front-loads what the AI needs to know before it generates anything. The second half checks the output for what the AI couldn’t know.

The front-loading half is built around six elements that should appear in any prompt of consequence:

- Inputs. The source material the AI should work from: your content, your style guide, your accuracy bounds, examples of what you want and what you don’t.

- Task. The specific deliverable you want the AI to produce: a draft, a rewrite, a scenario, a question bank, an alternative version of an existing piece.

- Structure. The format the output should take: sections, slides, a script, branching paths, a checklist.

- Constraints. What the output must include or avoid: length, complexity, banned terms, factual bounds, tone limits.

- Tone. The voice the output should adopt: formal, conversational, peer-to-peer, technical-but-readable, audience-specific.

- Objective. What the output is supposed to accomplish for the learner: recall, application, decision-making, behavior change.

A prompt missing any of these almost always produces output that has to be rewritten. Front-loading them turns minutes of revision into seconds of validation. The more you tell the AI about your design before it generates, the closer the output lands to something you can actually use.
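
As a rough illustration of the six elements above, the sketch below treats a prompt as a structured brief that refuses to render until every element is filled in. The class name `PromptBrief`, its methods, and the sample values are all hypothetical, invented for this example; they do not describe any particular AI tool or its API.

```python
from dataclasses import dataclass, fields


@dataclass
class PromptBrief:
    """The six front-loaded elements. All names here are illustrative."""
    inputs: str
    task: str
    structure: str
    constraints: str
    tone: str
    objective: str

    def missing(self) -> list[str]:
        """Return the names of elements left empty or whitespace-only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Assemble the prompt text, refusing to render an incomplete brief."""
        gaps = self.missing()
        if gaps:
            raise ValueError(f"Prompt brief is missing: {', '.join(gaps)}")
        return "\n\n".join(
            f"{f.name.upper()}:\n{getattr(self, f.name).strip()}"
            for f in fields(self)
        )


# Sample values are made up for illustration only.
brief = PromptBrief(
    inputs="Returns policy (pasted below) and our style guide.",
    task="Draft one branching customer-service scenario.",
    structure="Three decision points, two options each.",
    constraints="Under 400 words. Use only the pasted policy.",
    tone="Conversational, peer-to-peer.",
    objective="Learner decides when to escalate a refund request.",
)
print(brief.render())
```

The point of the design is the refusal: a brief with a blank objective or no constraints fails before anything is sent to a tool, which is cheaper than discovering the gap in the output.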

What the human reviews for

The second half of the workflow is review. The AI is fast at producing output. It’s incapable of telling you whether the output fits the design.

A useful review checks the output against five things:

- Objective alignment. Does the output actually teach what the objective said it should? An AI can write a beautiful scenario about customer service that doesn’t develop any of the specific decision-making skills the training is supposed to build. Generation pleases the eye; alignment requires re-reading the objective and asking whether the output gets there.

- SME risk. Are there factual or technical claims in the output that need expert verification before this gets in front of a learner? AI is plausible. Plausible isn’t true. Anywhere the output asserts how a piece of equipment works, what a regulation requires, or how a process actually runs, an SME has to confirm or correct.

- Tone match. Does the output match your organization’s voice and the audience’s reading level? AI defaults to a generic professional register that often clashes with how your real audience talks and reads. The mismatch is subtle and erodes trust over time if it isn’t fixed.

- Feasibility. Can what the output describes actually be built, delivered, or executed in your real environment? AI doesn’t know what your authoring tool supports, what your LMS allows, or what timelines your team can hit.

- Accessibility. Does the output meet your accessibility standards? AI often produces content that reads well visually but breaks down for screen readers, color-blind learners, or non-native speakers. Accessibility review is its own discipline and can’t be left to the end or skipped.

A draft that clears these five checkpoints is closer to usable than one that doesn’t, regardless of how good the prompt was.
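
One way to keep the five checkpoints from being skipped is to track them explicitly. The sketch below is a minimal record-keeping example, assuming a human reviewer supplies every verdict; the code judges nothing itself. The names `CHECKPOINTS` and `DraftReview`, and the sample note, are hypothetical.

```python
from dataclasses import dataclass, field

# The five review checkpoints, in the order listed above.
CHECKPOINTS = (
    "objective_alignment",
    "sme_risk",
    "tone_match",
    "feasibility",
    "accessibility",
)


@dataclass
class DraftReview:
    """Records a human reviewer's verdict on each checkpoint."""
    verdicts: dict[str, bool] = field(default_factory=dict)
    notes: dict[str, str] = field(default_factory=dict)

    def record(self, checkpoint: str, passed: bool, note: str = "") -> None:
        """Store one human verdict, rejecting names outside the checklist."""
        if checkpoint not in CHECKPOINTS:
            raise KeyError(f"Unknown checkpoint: {checkpoint}")
        self.verdicts[checkpoint] = passed
        if note:
            self.notes[checkpoint] = note

    def outstanding(self) -> list[str]:
        """Checkpoints not yet reviewed, or reviewed and failed."""
        return [c for c in CHECKPOINTS if not self.verdicts.get(c, False)]

    def cleared(self) -> bool:
        """True only when all five checkpoints have been passed."""
        return not self.outstanding()


# Illustrative review of one draft; the note text is invented.
review = DraftReview()
review.record("objective_alignment", True)
review.record("sme_risk", False, "Procedure steps need SME sign-off.")
review.record("tone_match", True)
print(review.outstanding())  # ['sme_risk', 'feasibility', 'accessibility']
print(review.cleared())      # False
```

An unreviewed checkpoint counts the same as a failed one here, so a draft cannot clear review by omission.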

Why this distinction matters

Two consequences ride on the distinction.

The first is buyer-side. L&D leaders are being pitched AI-powered course creation tools that conflate generation with design. The marketing claim is that the tool produces a finished course. What the tool produces is content. The course requires the design work the tool doesn’t do, and the buyer ends up either paying twice (for the tool and for the rebuild) or shipping training that doesn’t teach.

The second is career-side. Instructional designers are being told their work is being automated away. The opposite is true. AI changes what an instructional designer does in any given hour, but the discipline matters more, not less, when content generation is cheap. When everyone can produce content fast, the value of knowing what to produce, in what form, against what objective, separates training that works from training that just exists.

The expertise that AI hasn’t extinguished is design judgment. The discipline is shifting toward more time on objectives, audience analysis, learning architecture, and review, and less time on first-draft writing. That’s not extinction. That’s a promotion.

A note on Neovation’s approach

We use AI tools daily at Neovation. Drafting, variant generation, comparison work, the rewriting tasks that used to consume hours: those have all gotten faster. What hasn’t changed is who owns the design. Our instructional designers run the front-loading and the review. The prompt anatomy and the five-checkpoint review lens are how the team integrates AI without letting it design by accident. For the discipline of instructional design itself, our piece on what training to standardize first covers one specific place AI is genuinely useful inside a designed workflow, and our guide to designing a curriculum covers the broader architecture work that sits above it.

If you’re evaluating an AI tool that promises full course creation, the question to ask isn’t whether it generates content well; it almost certainly does. The question is who’s making the design decisions: the buyer, the SME, the L&D team, or no one. If the answer is “no one,” the tool is producing content, not training. An internal team can run the design work and use AI for generation. A freelance instructional designer can do the same on smaller scopes. For projects where the workflow benefits from a full team, an outside partner like Neovation runs the design and the production together. If you’d like to discuss how AI fits into a project you’re scoping, request a quote or browse our case studies for examples.

Frequently asked questions

Can AI replace an instructional designer?

No, but it changes the job. AI is now better at first-draft generation than most humans are. What it doesn't do is decide what should be taught, design the structure of how it gets taught, or review whether the output meets the objective. Those are the design decisions, and they remain the instructional designer's work. The discipline is shifting away from drafting and toward design and review.

Is AI-generated training better or worse than traditional eLearning?

It depends entirely on whether instructional design happened. AI-generated content with no design behind it is usually worse: fluent but unfocused, polished but disconnected from any real objective. AI-assisted training where a designer ran the workflow can be as good as or better than traditionally produced training, often faster and at lower cost.

What's the right way to use AI in eLearning development?

Treat it as a research and drafting assistant, not a decision-maker. The work that should stay human is the design: figuring out what the training is for, who it's for, what they need to do differently afterward, and whether what the AI produced actually serves that goal. The work AI does well is the production: drafting, varying, summarizing, rewriting. Use it for the second; don't ask it to do the first.

How do I tell whether an AI tool is doing instructional design or just generating content?

Ask which design decisions the tool makes and which it leaves to the human. If the tool decides what objectives the training should target, what audience it's for, what modality fits, and what assessment measures real capability, it's making design claims that are worth scrutinizing carefully. If the tool generates content based on inputs the human provides (objectives, audience, modality, structure), it's generation, not design, which is honest and useful within its scope.

Are AI-assisted courses just lower-quality custom courses?

Not inherently. The quality depends on the design work that runs around the AI. A custom course built without instructional design is also low-quality, regardless of whether AI was involved. The question isn't 'was AI used?' but 'did instructional design happen?' If the answer is yes, the tools used to produce the content matter much less than the design decisions that shaped the production.

How should I evaluate an AI eLearning vendor?

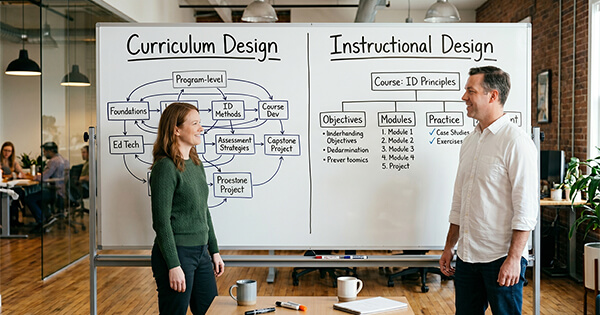

Look at their workflow, not their tools. Ask how they decide what should be taught, who reviews the AI output and against what criteria, who owns the design decisions, and what happens when the AI produces something plausibly wrong. Vendors who treat AI as a content generator inside an instructional design process produce different work from vendors who treat AI as the entire process. Our guide to instructional design vs curriculum design covers some related distinctions in vendor evaluation.